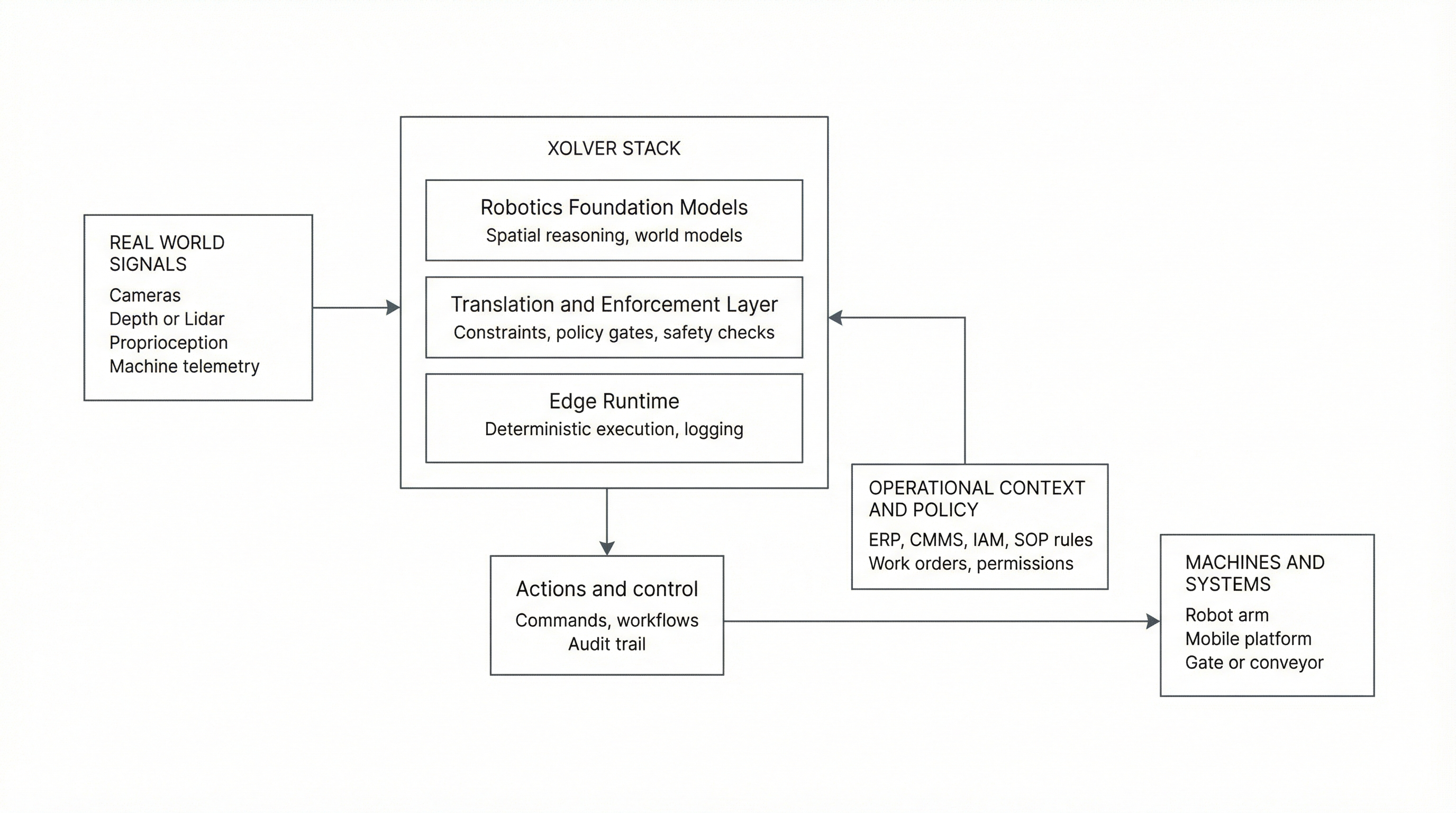

Infrastructure for physical autonomy.

Models propose, enforcement constrains, runtime executes. Xolver separates these roles so perception, validation, and execution do not collapse into a single unchecked loop.

ERP and IAM feed policy and permissions, not perception.

- Models output world state and task intent

- Enforcement outputs allowed actions

- Runtime outputs execution traces
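The separation of roles listed above can be illustrated with a minimal sketch. This is not Xolver's API: every name here (`Proposal`, `Policy`, `Trace`, `propose`, `enforce`, `execute`, `run_once`) is hypothetical, and the "model" is a trivial stand-in. It only shows the shape of the idea, under the assumption that each stage consumes the previous stage's output and that policy comes from outside perception.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Proposal:
    """Hypothetical model output: observed world state plus a task intent."""
    world_state: dict
    intent: str


@dataclass(frozen=True)
class Policy:
    """Hypothetical permissions, assumed to come from ERP/IAM, not perception."""
    allowed_intents: frozenset


@dataclass
class Trace:
    """Hypothetical runtime output: a record of what was executed."""
    events: list = field(default_factory=list)


def propose(sensor_reading: dict) -> Proposal:
    # Stand-in for a model: turns a reading into world state and an intent.
    intent = "pick_item" if sensor_reading.get("item_visible") else "wait"
    return Proposal(world_state=dict(sensor_reading), intent=intent)


def enforce(proposal: Proposal, policy: Policy) -> list:
    # Enforcement only filters proposed intents; it never adds actions.
    return [proposal.intent] if proposal.intent in policy.allowed_intents else []


def execute(allowed_actions: list) -> Trace:
    # The runtime acts only on allowed actions and records each one.
    trace = Trace()
    for action in allowed_actions:
        trace.events.append(f"executed:{action}")
    return trace


def run_once(sensor_reading: dict, policy: Policy) -> Trace:
    # Propose, then constrain, then execute: no stage skips the one before it.
    return execute(enforce(propose(sensor_reading), policy))
```

In this sketch, a proposal the policy does not permit yields an empty trace, because the runtime never sees the model's output directly, only what enforcement lets through.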